How would we know if disasters are becoming more costly due to climate change?

Part 4 of the series, making sense of trends in disaster losses

This post responds to a reader’s request for greater detail on connecting climate change and disasters. Please feel free to ask questions in the comments and I will be happy to engage. Here are Part 1, Part 2 and Part 3 in the series.

To show that disasters have become more costly because of human-caused climate change, several criteria must be met. First, there must be an actual increase in the costs of disasters. Second, there must be a detectable increase in either the frequency or intensity of weather events which are associated with the disasters. Such an increase must be on time scales of decades or longer. Third, the detected increase in frequency or intensity must be attributed to human causes, typically defined narrowly in terms of greenhouse gas emissions, but other causes are also possible.

This framework of detection and attribution comes directly from the Intergovernmental Panel on Climate Change (IPCC), and it is exactly the same framework that underpins our understanding of the role of greenhouse gas emissions in increasing global average temperatures.

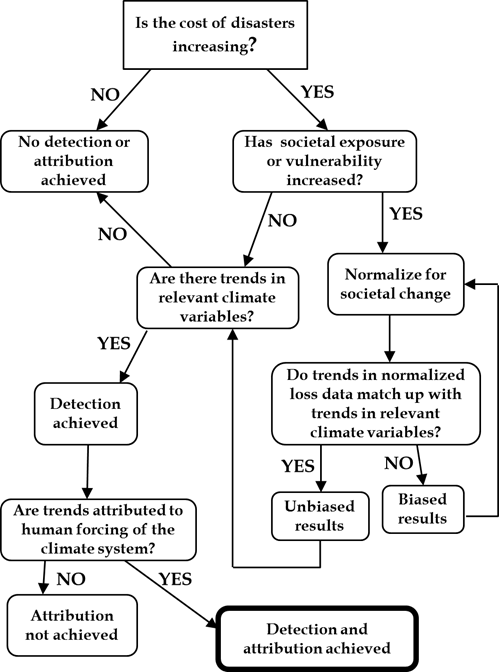

This post provides a simple flowchart that illustrates the necessary and sufficient conditions for the detection and attribution of a role for human-caused climate change in the increasing costs of disasters, based on the framework and concepts of the IPCC. The flowchart also incorporates the concept of “normalization,” which was introduced in Part 1 of this series.

To work your way through the flowchart, first ask if the costs of disasters are increasing. If no, then obviously there are no trends detected and nothing to attribute. If yes, then the next question to ask focuses on societal exposure and vulnerability. A disaster only occurs when an extreme event meets an exposed and/or vulnerable society.

If societal conditions have indeed changed, such as you can see below for Miami Beach over a century, then it is necessary to normalize economic losses to reflect the greater exposure and/or vulnerability. A hurricane that struck Miami Beach in 1926 caused much less damage than the exact same hurricane would cause today. The increased loss total would be entirely due to more people, more property and more wealth. Normalization methods seek to account for those societal changes so that losses can be compared across time.
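For readers who think in code, the basic idea of normalization can be sketched in a few lines of Python. This is an illustration only: the function name `normalize_loss`, the choice of three adjustment factors (inflation, wealth per capita and population) and every number below are assumptions made for the example, not values from any actual normalization study.

```python
def normalize_loss(loss_then, inflation, wealth_per_capita, population):
    """Express a historical loss under present-day societal conditions.

    Each factor is the ratio of today's value to the value in the year
    of the event. All three factors are hypothetical inputs here.
    """
    return loss_then * inflation * wealth_per_capita * population


# Made-up numbers, purely to show the arithmetic.
loss_then = 100e6  # loss recorded in the year of the event, in dollars of that year
normalized = normalize_loss(
    loss_then,
    inflation=15.0,          # assumed price-level ratio, today vs. then
    wealth_per_capita=4.0,   # assumed real wealth-per-person ratio
    population=10.0,         # assumed ratio of people in the affected area
)
print(f"Normalized loss: ${normalized:,.0f}")
```

The point of the sketch is that the same physical event can produce a far larger loss total today for purely societal reasons, so raw loss totals from different eras should not be compared directly.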

Next, it is necessary to look at trends in actual weather and climate variables. This is for two reasons. First, if a normalization is unbiased — meaning that it has not left out important factors — then the trends observed in the normalized loss time series should match up consistently with trends in the weather or climate phenomena that contribute to losses. You can see an example of such a consistency check in our work on normalized U.S. hurricane losses, where trends match up well on various time scales. If trends do not match up, then the normalization methods probably need more work.
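A very crude version of this consistency check can be sketched as follows. It fits a least-squares trend line to a normalized loss series and to a series describing the weather events, then asks only whether the two trends point in the same direction. The function names and the data are hypothetical, and an actual analysis would also look at trend magnitudes, statistical significance and multiple time scales.

```python
def ols_slope(years, values):
    """Ordinary least-squares slope of values against years."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    return sxy / sxx


def trends_consistent(years, normalized_losses, event_metric):
    """Crude check: do the two series trend in the same direction?"""
    loss_trend = ols_slope(years, normalized_losses)
    event_trend = ols_slope(years, event_metric)
    return (loss_trend > 0) == (event_trend > 0)


# Made-up series for illustration only.
years = [2000, 2001, 2002, 2003, 2004, 2005]
normalized_losses = [5.0, 3.0, 6.0, 2.0, 4.0, 3.5]  # hypothetical, in billions
landfall_counts = [2, 1, 3, 1, 2, 1]                # hypothetical event counts

print(trends_consistent(years, normalized_losses, landfall_counts))
```

If the check fails, for example because normalized losses rise while the events themselves do not, that is a hint that the normalization has left something out.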

A second reason is that for increasing disaster losses to be attributed to climate change, logically there must be more frequent or more intense weather or climate events associated with the disasters. If there are detectable increasing trends and these trends have been attributed to climate change, then, voila, the increase in disaster losses has been attributed to climate change.
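The walk through the decision steps can also be written as a short function. This is a sketch of the logic as described in the text above, not a transcription of the flowchart itself. The function name, the argument names and the wording of each verdict are assumptions, as is the explicit branch for losses that stop increasing once they are normalized.

```python
def attribution_verdict(
    costs_increasing,
    society_changed,
    normalized_costs_increasing,
    weather_trend_detected,
    trend_attributed_to_humans,
):
    """Walk the detection-and-attribution steps and return a verdict string."""
    if not costs_increasing:
        return "no increase in costs, nothing to attribute"
    # If exposure or vulnerability changed, judge the normalized series instead.
    if society_changed and not normalized_costs_increasing:
        return "increase explained by exposure and vulnerability"
    if not weather_trend_detected:
        return "no detected trend in the associated weather events"
    if not trend_attributed_to_humans:
        return "trend detected but not attributed to human causes"
    return "increase in costs attributed to human-caused climate change"


# Example: costs rise, but the rise disappears after normalization.
print(attribution_verdict(True, True, False, False, False))
```

Every step has to be passed in order, which is why a rising loss total on its own says nothing about climate change.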

Here at The Honest Broker I spend a lot of time looking at actual research and data on trends in disaster losses, overall and for specific phenomena. I won’t repeat those analyses here, but you can have a look at some of them in my popular series on “what the media won’t tell you about . . .”.

A last important point for today: some researchers have decided to abandon the IPCC framework of detection and attribution in favor of an alternative approach, called single-event attribution.

Single-event attribution uses climate models to calculate the odds that a particular extreme event was made more likely as a direct and attributable consequence of human-caused climate change. Such studies generally look at two scenarios, one a counterfactual based on no increase in greenhouse gas concentrations in the atmosphere and the other with observed increased concentrations. Then, model runs under the two scenarios are compared to see whether extreme events similar to the one in question became more likely in the runs with more greenhouse gases.
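The comparison between the two sets of model runs can be sketched as a ratio of exceedance probabilities, often called a probability ratio in the attribution literature. The sketch below is a bare-bones illustration: the function names, the threshold and the "model output" values are all made up, and real studies use large ensembles and more careful statistics.

```python
def exceedance_probability(values, threshold):
    """Fraction of model runs in which the variable reaches the threshold."""
    return sum(v >= threshold for v in values) / len(values)


def probability_ratio(factual, counterfactual, threshold):
    """Ratio of exceedance probabilities: with vs. without added greenhouse gases."""
    p_factual = exceedance_probability(factual, threshold)
    p_counterfactual = exceedance_probability(counterfactual, threshold)
    if p_counterfactual == 0:
        # The event never occurs in the counterfactual runs, so the ratio is
        # unbounded (or undefined if it never occurs in either set).
        return float("inf") if p_factual > 0 else float("nan")
    return p_factual / p_counterfactual


# Hypothetical peak rainfall (mm) from two tiny sets of model runs.
factual_runs = [80, 95, 110, 120, 130, 90, 105, 125]
counterfactual_runs = [75, 85, 100, 115, 90, 80, 95, 121]
event_threshold = 120  # assumed magnitude of the observed event

print(probability_ratio(factual_runs, counterfactual_runs, event_threshold))
```

Note how much the answer depends on choices made by the analyst: the threshold that defines the event, the model and the design of the counterfactual runs all feed directly into the ratio.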

While such studies are intellectually interesting, they are also deeply problematic. One should be suspicious of any study that claims, say, that a particular flood was made more likely (or more intense) yet the empirical record shows no long-term increase in floods or their intensity in that region. If you claim after observing one roll of a die that it is loaded such that more sixes will appear, then if I am to believe you it helps to be able to show that in a long sequence of recent rolls more sixes actually appear than would otherwise be expected.
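The die analogy can be made concrete with a little arithmetic. The sketch below computes the chance that a fair die would produce at least a given number of sixes in a given number of rolls, using the exact binomial tail. The function name and the roll counts are chosen only for illustration.

```python
from math import comb


def binomial_tail(k, n, p):
    """Probability of at least k successes in n independent trials."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


p_six = 1 / 6  # chance of a six on a fair die

# One roll, one six: a fair die does this one time in six.
one_roll = binomial_tail(1, 1, p_six)

# A long record with many sixes: 30 or more sixes in 100 rolls.
long_record = binomial_tail(30, 100, p_six)

print(f"At least 1 six in 1 roll:      {one_roll:.3f}")
print(f"At least 30 sixes in 100 rolls: {long_record:.6f}")
```

A single six is entirely unremarkable for a fair die, while a long run with far too many sixes is not. That is the sense in which the long-term record matters for any claim that the die is loaded.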

The abandonment of the IPCC framework for detection and attribution with respect to extreme events can also look like a political strategy in the face of the inability of conventional IPCC methods to either detect or attribute a signal of human-caused climate change in the historical record of most types of weather extremes.

The use of highly uncertain and malleable methods with essentially no predictive skill to associate essentially any extreme event with climate change can certainly help to generate headlines and to support advocacy. As one such study concluded of the flexible methods of single-event attribution:

“any event attribution statement can—and will—critically depend on the researcher’s decision regarding the framing of the attribution analysis, in particular with respect to the choice of model, counterfactual climate, and boundary conditions”

Recall the “garden of forking paths.”

Philosopher of biology Elisabeth Lloyd and science historian Naomi Oreskes suggest political motivations lie behind abandoning the IPCC detection and attribution framework in favor of the alternative approach to counter “contrarian claims”:

“The traditional risk‐based approach to extreme event [detection and attribution under the IPCC] may lead to a challenge in communication, and to the impression that climate science is less epistemically secure than it actually is.... Because no event can be attributed to climate change without an attribution study, this effectively means that scientists following community norms will nearly always convey the message that individual events are not related to climate change—or at least, that we cannot say if they are. In short, it conveys the impression that we just do not know, which feeds into both contrarian claims that climate science is in a state of high uncertainty, doubt, or incompleteness, and the general tendency of humans to discount threats that are not imminent.”

The rise of “event attribution” studies offers science-like support to those focused on climate advocacy, but it is not clear that they offer much in the way of empirical rigor, particularly as compared to the IPCC detection and attribution framework. As one climate scientist wryly observes of such methods, “It is important to appreciate that being quantitative is not necessarily the same thing as being rigorous.”

Because counterfactual model-based studies for individual event attribution are easy to perform, malleable in their design, well-suited for headlines, and meet a political demand for associating disasters with climate change, they are surely here to stay. In coming weeks I’ll have a more detailed post specifically on this alternative approach and on how to be an educated consumer of such studies.

“The abandonment of the IPCC framework for detection and attribution with respect to extreme events can also look like a political strategy in the face of the inability of conventional IPCC methods to either detect or attribute a signal of human-caused climate change in the historical record of most types of weather extremes.” Because there is no major negative effect of CO2 on the climate. Just higher plant growth. That’s about it.

"The use of highly uncertain and malleable methods with essentially no predictive skill to associate essentially any extreme event to climate change can certainly help to generate headlines and to support advocacy."

"As one climate scientist wryly observes of such methods, “It is important to appreciate that being quantitative is not necessarily the same thing as being rigorous.”

Indeed, Roger. Broadly speaking, that's how we got to the present lay of the land.